UK Should Develop Sodium-Ion Battery Technology for Energy Transition

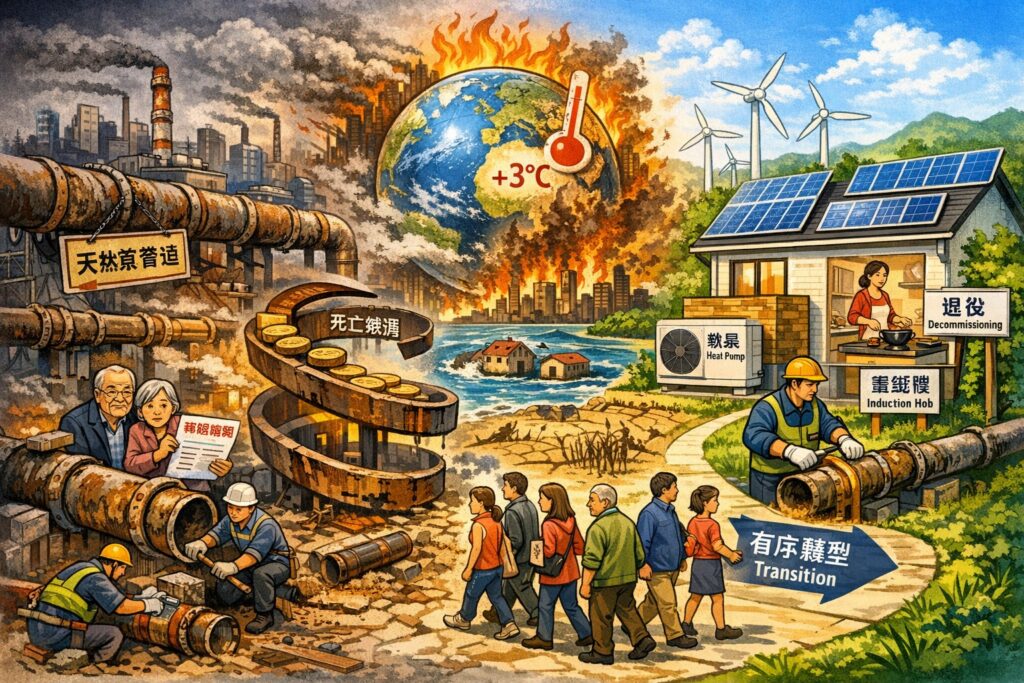

Beneath the grand slogan of net zero, the UK is being pushed towards a deeper and harsher transformation: from a nation reliant on burning fossil fuels to one that operates fundamentally on electricity. Heating, transportation, industrial processes, and even national security and geopolitics will increasingly depend on the stability, affordability, and autonomy of the electricity system. In this new electric nation, batteries are no longer merely components for electric vehicles or smartphones; they are critical infrastructure on par with the power grid itself.

The problem is that the UK has all but lost its leading position in the lithium battery race. Whether the chemistry is NMC or LFP, the industry’s centre of gravity has shifted decisively towards China, from mineral sourcing and material processing to manufacturing technology and large-scale production. This reality cannot be reversed by a few sentences in policy documents about ‘reviving manufacturing.’ Although the UK has recently begun to discuss a domestic battery industry, the effort is largely a defensive measure rather than a genuine bid for leadership. If the UK’s energy transition remains based entirely on lithium battery systems, its reliance on external supply chains will be structural, constraining both energy security and industrial autonomy.

Yet technology never pauses for any nation. Sodium-ion batteries are a rapidly evolving technology that no single country has yet monopolized, and both their value and their limitations are clearly defined. Unlike lithium, sodium is abundant in the Earth’s crust, with a dispersed supply that does not depend on highly concentrated strategic minerals such as lithium, cobalt, and nickel. This gives sodium-ion batteries a structural advantage in supply security, long-term cost, and geopolitical risk. Their chemical properties also provide higher thermal stability, which translates to lower fire risk and more flexible site selection for grid-scale applications.

Sodium-ion batteries are not without drawbacks. Their energy density is still lower than that of lithium batteries, so they need more material and more volume for the same storage capacity, which makes it difficult to replace lithium batteries in weight-sensitive uses such as long-range electric vehicles. Moreover, the industry has not yet reached mature scale, and short-term costs may not be lower than those of the highly commoditized LFP. The most competitive applications for sodium-ion batteries therefore lie not in pursuing extreme range but in grid-scale energy storage, industrial backup, and balancing renewable energy systems, which is precisely the most vulnerable yet crucial part of the UK’s energy transition.

This matters particularly for the UK. The UK electricity system relies heavily on wind energy, and winter is precisely when solar output is weakest and the weather most unstable. Several consecutive days with no wind and little sunlight would put the electricity system under immense pressure. Sodium-ion batteries have already demonstrated practical feasibility for medium- to long-duration storage, over periods of hours to days. Combined with pumped storage, hydrogen, or other long-duration energy storage technologies, they could serve as a crucial buffer for the grid, significantly enhancing the UK’s winter energy resilience and reducing its dependence on natural gas and imported electricity.

On this path, the UK is not starting from scratch. Faradion, an early pioneer in sodium-ion batteries, was born in the UK, and the core knowledge and patents remain deeply embedded in the UK’s research system. The Faraday Institution, as a national battery research hub, has incorporated ‘post-lithium’ into its long-term research agenda, and several top universities have accumulated leading results in sodium-based materials, electrode design, and system integration. Compared to the capital-intensive, volume-driven lithium battery industry, sodium-ion technology relies more on fundamental research and engineering integration capabilities, which is a relative strength of the UK.

What the UK truly needs, then, is a clear-eyed and pragmatic dual-track strategy. Lithium batteries will remain the mainstream choice for electric vehicles over the next decade, and the UK must continue to invest so that its automotive industry and related supply chains are not marginalized. At the same time, it should explicitly position sodium-ion batteries as a strategic technology for energy security and grid transformation, accelerating the entire chain from research and demonstration to actual deployment, particularly in grid storage and industrial applications.

In fact, this direction is already present in existing policy. Whether in the national battery strategy, the critical minerals strategy, or official research on long-duration storage, recent government documents have repeatedly emphasized the importance of technological diversification and supply chain resilience. Sodium-ion batteries sit at the intersection of these policies: an option that fits the logic of energy security and also has industrial potential.

If the UK genuinely wishes to play a leadership role in the net-zero era, the key lies not only in installing the most wind turbines or solar panels but also in mastering the core technologies that allow the electricity system to function even under the worst conditions. Sodium-ion batteries offer a realistic and rare window: a critical technology that has not yet been fully monopolized and is highly compatible with the UK’s energy structure. Missing out on lithium was a matter of structure and timing; if the UK misses out on sodium-ion technology, that will be not fate but a choice.
